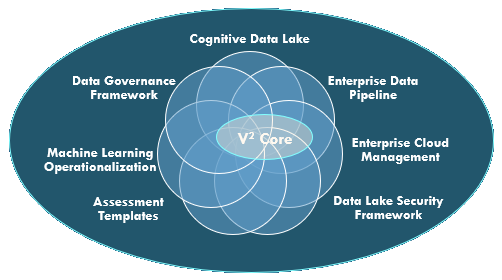

Vsquare Frameworks and Accelerators

We have created a rich library of reusable components and frameworks that accelerate implementations and help realize business value very early in a ‘Data Driven’ program. All of these frameworks are built on our V2 Core engine, a ready-to-use base library that facilitates application development with a minimal amount of code.

- Cognitive Data Lake

This is a generic implementation of a Data Lake with ontological mapping. The framework creates a subject-area-wise mapping of the source data to pre-defined ontologies. Once data starts flowing into the Data Lake, a cognitive layer is built to provide a natural language interface to the data objects within the enterprise. We have already built all the basic components, so fully configuring the Cognitive Data Lake requires only minimal configuration and subject matter expertise from clients.
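The subject-area mapping described above can be pictured with a small sketch. This is only an illustration under stated assumptions: the `ONTOLOGY` dictionary, its concept names and aliases, and the `map_columns` helper are all hypothetical and are not part of the V2 Core engine.

```python
# Hypothetical ontology: subject area -> canonical concept -> known source aliases.
ONTOLOGY = {
    "customer": {
        "customer_id": {"cust_id", "customer_no", "customerid"},
        "full_name": {"name", "cust_name", "customer_name"},
    },
    "order": {
        "order_id": {"ord_id", "order_no"},
        "order_date": {"ord_dt", "order_dt"},
    },
}


def map_columns(subject_area, source_columns):
    """Map source column names to ontology concepts for one subject area.

    Returns (mapped, unmapped): mapped is {source_column: concept},
    unmapped lists the columns that need a subject matter expert's review.
    """
    concepts = ONTOLOGY[subject_area]
    mapped, unmapped = {}, []
    for col in source_columns:
        key = col.strip().lower()
        # A column matches a concept by its canonical name or any known alias.
        concept = next(
            (c for c, aliases in concepts.items() if key == c or key in aliases),
            None,
        )
        if concept is None:
            unmapped.append(col)
        else:
            mapped[col] = concept
    return mapped, unmapped


mapped, unmapped = map_columns("customer", ["CUST_ID", "Name", "region"])
```

Columns that end up in `unmapped` are the place where client configuration and subject matter expertise would come in.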

- Enterprise Data Pipeline

In a typical Big Data implementation, there are common patterns in Data Ingestion, Processing and Advanced Analytics. We have built accelerators for these patterns so that new implementations are quick and cost effective. Enterprise Data Pipeline (EDP) enables enterprises to on-board their data into a data lake (both cloud and on-premise) and derive meaningful insights from it. EDP provides data ingestion, data processing, basic data quality checks and data reconciliation, auditing, logging, alerting and operational reporting.

Data Ingestion - A configuration-driven framework that ingests data from various sources, stores it in the desired location and exposes it to users for analytical purposes. The framework is intended to support ingestion in Real Time, Near Real Time, Micro-batch and Batch modes.

Supported Sources - Any RDBMS / Any File (CSV, PSV, XML, etc.) / Streaming Sources

Supported Destinations - HDFS / S3 / GCS / Storage Blob

Support for Seamless Data Processing

Basic quality checks/reconciliation

Auditing and Logging

Alerting

Operational Reporting
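To make the idea of configuration-driven ingestion with reconciliation and auditing more concrete, here is a minimal sketch. It is not the EDP implementation: the config keys (`job`, `mode`, `source`, `destination`), the `ingest` function and the use of local files in place of HDFS, S3, GCS or Blob storage are all assumptions made for illustration.

```python
import csv
import json
import logging
from pathlib import Path

logging.basicConfig(level=logging.INFO)
log = logging.getLogger("edp_sketch")  # hypothetical logger name


def ingest(config):
    """Copy one delimited file to the destination and return an audit record."""
    src = Path(config["source"]["path"])
    dest = Path(config["destination"]["path"])
    delimiter = config["source"].get("delimiter", ",")

    # Ingestion: read the source with the delimiter named in the config.
    with src.open(newline="") as f:
        rows = list(csv.reader(f, delimiter=delimiter))

    # Store the data at the destination as comma-separated values.
    dest.parent.mkdir(parents=True, exist_ok=True)
    with dest.open("w", newline="") as f:
        csv.writer(f).writerows(rows)

    # Reconciliation: re-read the destination and compare row counts.
    with dest.open(newline="") as f:
        rows_written = sum(1 for _ in csv.reader(f))

    audit = {
        "job": config["job"],
        "mode": config["mode"],
        "rows_read": len(rows),
        "rows_written": rows_written,
        "reconciled": len(rows) == rows_written,
    }
    # Auditing and logging; the error branch stands in for an alerting hook.
    if audit["reconciled"]:
        log.info("audit %s", json.dumps(audit))
    else:
        log.error("reconciliation failed %s", json.dumps(audit))
    return audit
```

A job would then be described entirely by data, for example `{"job": "customers_daily", "mode": "batch", "source": {"path": "in/customers.psv", "delimiter": "|"}, "destination": {"path": "lake/customers.csv"}}`, so onboarding a new source means adding a config entry rather than writing new code.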

- Enterprise Cloud Management

Enterprises are moving to the cloud for the simple reason of flexibility, achieved through cloud automation and API support. Most cloud providers offer APIs for individual components; in Google Cloud, for example, there are APIs to create organizations, folders and projects, and to set up users and IAM policies. However, when an enterprise decides to adopt Google Cloud, a lot of work still has to be done by the enterprise itself. The first step towards setting up any project in Google Cloud is to set up the organization resources (departments/sub-departments). Next come the projects, which contain users with different IAM policies and roles. Refer to one of the sample diagrams of the Google resource hierarchy.
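The setup order described above (organization resources first, then projects, then IAM policies) can be sketched as a declarative hierarchy that is walked to produce an ordered plan. This is a sketch under assumptions: the `Folder` and `Project` classes, the `plan` function and the step names are hypothetical, and no Google Cloud API is called. A real tool would translate each step into the corresponding provider API request.

```python
from dataclasses import dataclass, field


@dataclass
class Project:
    project_id: str
    iam: dict = field(default_factory=dict)  # role -> list of members


@dataclass
class Folder:
    name: str  # a department or sub-department (or the organization itself)
    folders: list = field(default_factory=list)
    projects: list = field(default_factory=list)


def plan(node, path):
    """Return ordered setup steps so that parents always precede children."""
    steps = []
    for folder in node.folders:
        child_path = f"{path}/{folder.name}"
        steps.append(("create_folder", child_path))
        steps.extend(plan(folder, child_path))
    for project in node.projects:
        steps.append(("create_project", f"{path}/{project.project_id}"))
        # IAM bindings are applied only after the project exists.
        for role, members in sorted(project.iam.items()):
            for member in members:
                steps.append(("bind_iam", f"{project.project_id}:{role}:{member}"))
    return steps


# Illustrative hierarchy; all names are made up.
org = Folder(
    name="example-org",
    folders=[
        Folder(
            name="finance",
            projects=[
                Project("fin-analytics", {"roles/viewer": ["user:a@example.com"]})
            ],
        )
    ],
)
steps = plan(org, org.name)
```

Keeping the hierarchy as data makes it possible to review the plan before anything is created.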

- Data Lake Security Framework

- Assessment Templates

- Machine Learning Operationalization

- Data Governance Framework